I’m toying with a macro for method overloading support for static extensions.

First, let me say, I’m not advocating Haxe should support overloaded methods natively (there is an evolution proposal). And Haxe is already pretty flexible with function args via a OneOf abstract (though as an enum argument, it incurs runtime overhead and is not easy to use at runtime.)
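For readers who haven't met the pattern, here is a minimal sketch of a OneOf abstract over `haxe.ds.Either`. This is the commonly circulated community version, not something from the standard library, and the `describe` function is a hypothetical example. It shows where the runtime cost comes from: every argument gets wrapped in an enum value and has to be unwrapped with a switch.

```haxe
import haxe.ds.Either;

// Community-style OneOf abstract (an assumption; not part of the Haxe std lib).
abstract OneOf<A, B>(Either<A, B>) from Either<A, B> to Either<A, B> {
  @:from inline static function fromA<A, B>(a:A):OneOf<A, B> return Left(a);
  @:from inline static function fromB<A, B>(b:B):OneOf<A, B> return Right(b);
}

class Main {
  // Hypothetical function that accepts either a String or an EReg.
  public static function describe(pattern:OneOf<String, EReg>):String {
    // The argument arrives boxed in an Either and must be unwrapped at runtime.
    return switch ((pattern : Either<String, EReg>)) {
      case Left(s): "string: " + s;
      case Right(_): "regex";
    }
  }

  static function main() {
    trace(describe("abc"));  // implicit cast through fromA
    trace(describe(~/b+/));  // implicit cast through fromB
  }
}
```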

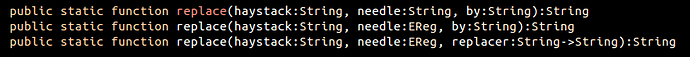

But for some flavors of API, I simply prefer overloaded methods, as it takes up less space in my brain. Take, for example, JavaScript-like String.replace signatures. It’s so nice to have all three of these…

…without having to remember where they exist in the Haxe API. e.g. these three are:

StringTools.replace, EReg.replace, and EReg.map

Ugh. It’s simply less cognitive load to say String.replace is going to provide all three behaviors.
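For reference, this is roughly what the three behaviors look like when called through the standard library today. The input string and replacement values are just illustrative.

```haxe
class Main {
  static function main() {
    var s = "hello world";

    // 1) Plain substring replacement lives on StringTools.
    var a = StringTools.replace(s, "o", "0"); // "hell0 w0rld"

    // 2) Regex with a replacement string lives on EReg.
    var b = ~/o/g.replace(s, "0"); // "hell0 w0rld"

    // 3) Regex with a callback is EReg.map, with the subject passed as an argument.
    var c = ~/o/g.map(s, function(r) return r.matched(0).toUpperCase()); // "hellO wOrld"

    trace(a);
    trace(b);
    trace(c);
  }
}
```

Three behaviors, two classes, and two different argument orders relative to the subject string.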

Some will say, “just learn the Haxe API.” Perhaps. But perhaps API-mapping helpers (as static extensions) could ease the transition of users from dynamic languages, like JavaScript, Ruby, etc. e.g. imagine using RubyHelper; providing a similar set of .sub and .gsub functions. Gateway drugs.

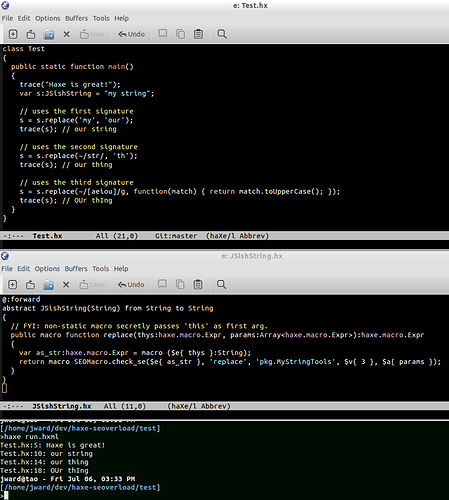

Thus, I’m toying with static extensions that support static method overloading. That is, your static extension may provide overloaded methods. The macro works by 1) renaming the overloaded methods on the library, then within the class that’s using it, 2) simply trying to type each option at each call site. If I’m thinking correctly, this plays nicely with all existing Haxe magic (abstracts, to/from casts, optional args, etc) by simply allowing the compiler to decide what works.
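The "try to type each option" step can be sketched with `Context.typeof`, which throws when an expression fails to type. To be clear, this is not SEMacro's actual code: `Overload`, `firstTyped`, `replaceStr`, and `replaceRe` are hypothetical names, and the renaming step is done by hand here rather than by a macro. The block spans two files because a macro is best kept in its own module.

```haxe
// ---- Overload.hx ----
import haxe.macro.Context;
import haxe.macro.Expr;

class Overload {
  // Returns the first candidate call expression that types successfully.
  public static macro function firstTyped(candidates:Array<Expr>):Expr {
    for (c in candidates) {
      try {
        Context.typeof(c); // throws if this candidate does not type
        return c;
      } catch (e:Dynamic) {
        // This overload does not fit the call site; try the next one.
      }
    }
    return Context.error("No overload matches these arguments", Context.currentPos());
  }
}

// ---- Main.hx ----
class Main {
  // Hand-renamed "overloads" of a replace() function.
  public static function replaceStr(s:String, sub:String, by:String):String
    return StringTools.replace(s, sub, by);

  public static function replaceRe(s:String, re:EReg, by:String):String
    return re.replace(s, by);

  static function main() {
    var s = "hello";
    // Only replaceStr types here, so the macro emits that call.
    trace(Overload.firstTyped(replaceStr(s, "l", "L"), replaceRe(s, "l", "L")));
    // Only replaceRe types here.
    trace(Overload.firstTyped(replaceStr(s, ~/l/g, "L"), replaceRe(s, ~/l/g, "L")));
  }
}
```

Because the compiler itself does the typing, implicit casts and optional arguments are considered the same way they would be for a hand-written call.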

Bkg / aside: I’ve played with various methods of overloading for a while. One early attempt used macro functions as the overloaded methods themselves. It wasn’t bad, but the main issue was that actually writing the overloaded methods was ugly (you needed #if macro and #else in just the right places, plus some messy boilerplate…)

I do like that the static extension approach makes writing overloaded methods perfectly natural. And limiting it to static extensions keeps the overhead down (it scans classes for their usings, and only searches when they implement SEMacro.Overloaded.) On my medium/large project, the cost of the global macro injection starts at around 5% overhead. I’ll have to measure as I start to use it more.

It’s also haxelib package friendly: If you write an overloaded SE library, and it depends on my SEMacro library (which will include the global metadata injection), then it all should just work.

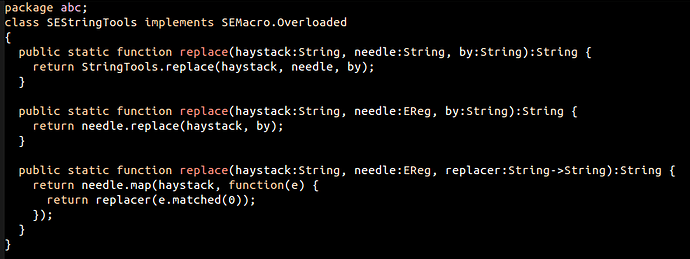

What does it look like? Here’s my overloaded String test library:

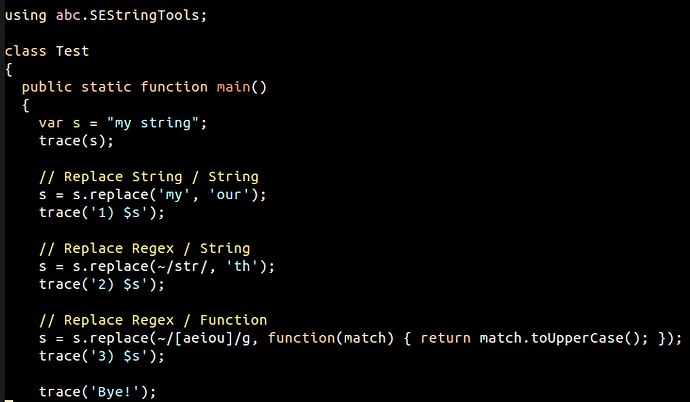

Pretty unsurprising, eh? And here’s the class that uses it:

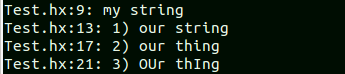

Again, pretty unsurprising. Which prints:

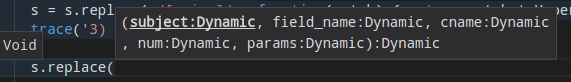

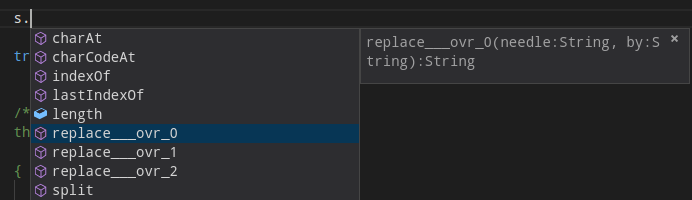

Naturally, code completion doesn’t really work… Well, kind of… All three renamed methods show up, and their completion does work normally:

But if you try to use the actual replace() function, you see a funky, unrelated SEMacro internal signature:

Clearly this approach doesn’t (yet) support runtime calls… it’d be a bit of work to support that automatically. You could allow static extension authors to write a runtime dynamic selection function. Or you could decide to only support static inline methods, nullifying the runtime issue entirely.
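As a rough idea of what an author-written runtime selection function might look like, here is a sketch that dispatches on the runtime type of its arguments. `RuntimeReplace` is a hypothetical name, the arguments are deliberately `Dynamic`, and it assumes Haxe 4.1+ for `Std.isOfType` (older versions would use `Std.is`).

```haxe
class RuntimeReplace {
  // Hypothetical runtime dispatcher covering the three replace behaviors.
  public static function replace(s:String, pattern:Dynamic, by:Dynamic):String {
    if (Std.isOfType(pattern, String)) {
      // String pattern, String replacement.
      return StringTools.replace(s, pattern, by);
    }
    if (Std.isOfType(pattern, EReg)) {
      var re:EReg = pattern;
      // EReg pattern with either a callback or a replacement string.
      return Reflect.isFunction(by) ? re.map(s, by) : re.replace(s, by);
    }
    throw "replace: unsupported pattern type";
  }
}
```

The trade-off is that all type checking moves to runtime, which is exactly what the compile-time approach avoids.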

And of course, I’m still working out a couple details.

Seems like an interesting approach to me. Thoughts? Do you love / hate overloading? Like the static extension workflow? What other overloaded APIs / language-helpers would benefit?

And this thread. It’s not just for static extension anymore.
